Search has evolved through distinct paradigms: from lexical keyword matching (TF-IDF), to machine-learned ranking (Learning-to-Rank), and most recently to Retrieval-Augmented Generation (RAG), which uses LLMs to synthesize answers from retrieved documents.

However, anyone using current RAG systems for deep research hits a hard ceiling quickly. While excellent at fact lookup, modern pipelines struggle with multi-step reasoning, resolving conflicting evidence, and orchestrating complex calculations.

A recent paper, Towards AI Search Paradigm (Li et al., 2025), provides a compelling blueprint for breaking through this ceiling. It argues that the future isn’t just better retrieval; it’s about re-architecting search engines from passive fetchers into proactive, multi-agent research teams.

Here is a deep dive into why this architectural shift is necessary and how it fundamentally changes the mechanics of information seeking.

The Bottleneck: Why Linear RAG Fails at Reasoning

The fundamental limitation of current RAG implementations is linearity.

A typical RAG pipeline is a straight line: User Query → Retrieve Top-K Docs → Stuff into Context Window → LLM Generates Answer.
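
To make the "straight line" concrete, here is a minimal sketch of such a pipeline. The corpus, `toy_retrieve`, and `toy_generate` are hypothetical stand-ins for a real search index and a real LLM call; only the control flow is the point.

```python
from typing import Callable, List


def linear_rag(
    query: str,
    retrieve: Callable[[str, int], List[str]],
    generate: Callable[[str], str],
    top_k: int = 3,
) -> str:
    """One retrieval, one generation, and no feedback between the two."""
    docs = retrieve(query, top_k)        # Retrieve Top-K Docs
    context = "\n\n".join(docs)          # Stuff into Context Window
    prompt = f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    return generate(prompt)              # LLM Generates Answer


# Toy stand-ins (assumptions) so the sketch runs without an index or a model.
CORPUS = [
    "Paris is the capital of France.",
    "Berlin is the capital of Germany.",
    "The Seine flows through Paris.",
]


def toy_retrieve(query: str, k: int) -> List[str]:
    """Rank documents by the number of words they share with the query."""
    query_words = set(query.lower().rstrip("?").split())

    def overlap(doc: str) -> int:
        return len(query_words & set(doc.lower().rstrip(".").split()))

    return sorted(CORPUS, key=overlap, reverse=True)[:k]


def toy_generate(prompt: str) -> str:
    """Placeholder for an LLM: answers from the first document in the context."""
    first_doc = prompt.split("\n")[1]
    return f"(toy answer based on: {first_doc})"


answer = linear_rag("What is the capital of France?", toy_retrieve, toy_generate, top_k=2)
```

Nothing in `linear_rag` can notice that retrieval went wrong or that the question needs more than one lookup; whatever comes back from `retrieve` is what the generator sees.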

This works for “What is the capital of France?” It fails spectacularly for “Who was older, Emperor Wu of Han or Julius Caesar, and by how many years?”

The latter requires a non-linear workflow:

- Decompose the query into sub-questions about birth/death dates for two distinct entities.

- Retrieve information for both separately.

- Resolve potential conflicts in historical data.

- Perform a mathematical calculation (date difference).

- Synthesize the final answer.

Forcing a single LLM call to handle this entire cognitive load in one pass often leads to hallucination or superficial answers. The AI Search Paradigm argues that to solve complex tasks, we must break the linear chain.
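
The non-linear workflow listed above can be sketched in a few lines. Everything here is an assumption made for illustration: the birth years in `TOY_EVIDENCE` are placeholder values rather than retrieved evidence, "older" is read as "born earlier", and majority voting is just one simple way to resolve conflicting sources.

```python
from collections import Counter
from typing import Dict, List

# Placeholder "retrieval results": candidate birth years, negative = BC.
# Illustrative toy values only, including one deliberately conflicting entry.
TOY_EVIDENCE: Dict[str, List[int]] = {
    "Emperor Wu of Han": [-156, -157, -156],
    "Julius Caesar": [-100, -100],
}


def decompose(entities: List[str]) -> Dict[str, str]:
    """Step 1: one sub-question per entity."""
    return {name: f"When was {name} born?" for name in entities}


def retrieve_birth_years(entity: str) -> List[int]:
    """Step 2: look up candidate answers for one entity (toy lookup)."""
    return TOY_EVIDENCE[entity]


def resolve(candidates: List[int]) -> int:
    """Step 3: settle conflicts by majority vote (an assumed strategy)."""
    return Counter(candidates).most_common(1)[0][0]


def compare_ages(a: str, b: str) -> str:
    sub_questions = decompose([a, b])
    years = {name: resolve(retrieve_birth_years(name)) for name in sub_questions}
    older, younger = sorted(years, key=lambda name: years[name])
    gap = years[younger] - years[older]          # Step 4: date arithmetic
    return f"{older} was born about {gap} years before {younger}."  # Step 5


answer = compare_ages("Emperor Wu of Han", "Julius Caesar")
```

Each step is a separate function with its own failure mode, which is exactly what a single prompt-and-generate call cannot expose.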

The Solution: Decoupling “Thinking” from “Doing”

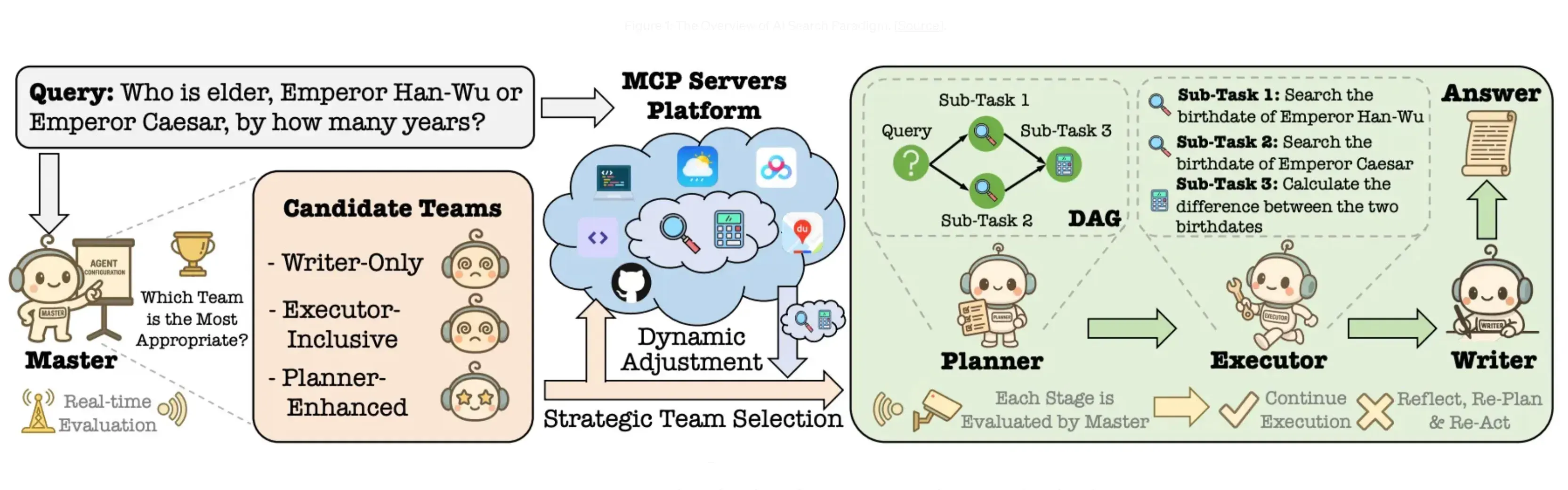

If a single monolithic model struggles with complex reasoning, the solution is modularity. The paper proposes a four-agent architecture that mimics a human research team, decoupling cognitive functions to prevent overload.

Rather than one overworked LLM trying to do everything, responsibilities are split:

- The Master (The Brain): It doesn’t do the work; it understands the problem. It analyzes query complexity and assigns roles, acting as the central orchestrator.

- The Planner (The Architect): It maps out how to solve the problem, breaking it into dependent sub-tasks.

- The Executor (The Hands): It performs the specific actions—invoking search tools, running code, or consulting databases.

- The Writer (The Scribe): It takes the raw results and synthesizes them into a coherent, human-readable response.

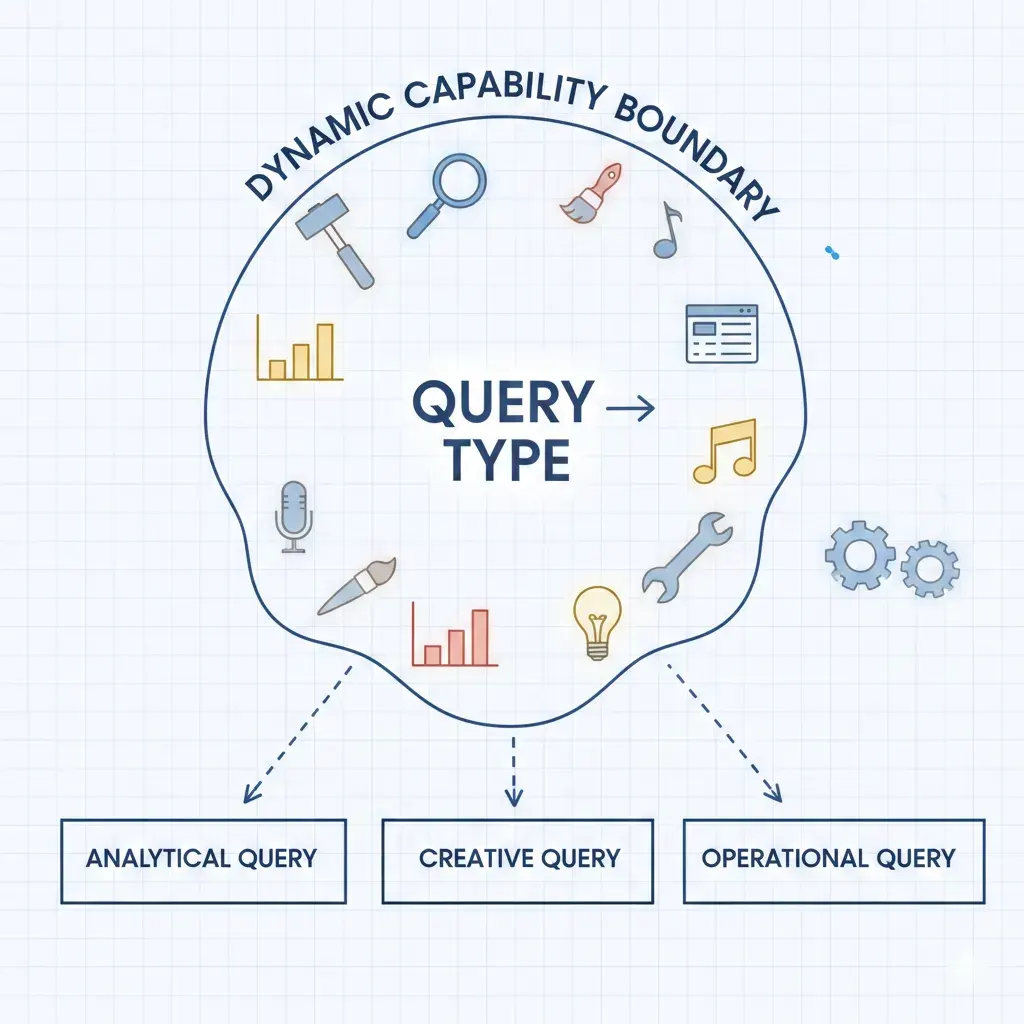

By specializing agents, the system can scale its capabilities based on the difficulty of the query, using a “Dynamic Capability Boundary” to deploy only the necessary tools for the job.

Figure 1: The system dynamically adjusts its tool usage based on query complexity, rather than forcing a one-size-fits-all approach.
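
One way to picture this routing is a Master that grades the query and activates only the agents that grade calls for. The sketch below is hypothetical: the three complexity tiers, the agent line-ups, and the keyword heuristic in `assess` are assumptions for illustration (a real Master would more plausibly use an LLM to judge complexity).

```python
from enum import Enum
from typing import Dict, List


class Complexity(Enum):
    SIMPLE = 1     # can be answered directly
    MODERATE = 2   # needs a lookup or a single tool call
    COMPLEX = 3    # needs a multi-step plan


# Assumed mapping from complexity tier to the agents the Master activates.
TEAMS: Dict[Complexity, List[str]] = {
    Complexity.SIMPLE: ["writer"],
    Complexity.MODERATE: ["executor", "writer"],
    Complexity.COMPLEX: ["planner", "executor", "writer"],
}

MULTI_STEP_CUES = ("compare", "older", "by how many", "versus")
LOOKUP_PREFIXES = ("who", "what", "when", "where")


def assess(query: str) -> Complexity:
    """Hypothetical keyword heuristic standing in for the Master's judgment."""
    q = query.lower()
    if any(cue in q for cue in MULTI_STEP_CUES):
        return Complexity.COMPLEX
    if q.startswith(LOOKUP_PREFIXES):
        return Complexity.MODERATE
    return Complexity.SIMPLE


def master(query: str) -> List[str]:
    """Return the agents to deploy for this query, in execution order."""
    return TEAMS[assess(query)]


team = master("Who was older, Emperor Wu of Han or Julius Caesar, and by how many years?")
```

The design point is that the expensive agents are opt-in: a simple query never pays for planning it does not need.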

The Mechanics: Why Graphs Beat Chains

Moving from a single agent to multiple agents requires a new way to manage tasks. This is where the paper’s most significant technical argument lies: moving from linear chains to Directed Acyclic Graphs (DAGs).

1. Non-Linear Task Planning

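Real research involves rabbit holes, backtracking, and parallel investigation. A linear chain cannot express parallel branches or the dependencies between them. The “Planner” agent instead builds a global DAG in which each node is a sub-task and each edge records that one sub-task needs another's output.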

This allows for layer-wise parallelism. If the system needs to research Caesar and Emperor Wu, it can do both simultaneously rather than waiting for one chain to finish before starting the next.
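
Layer-wise parallelism is straightforward to sketch with Python's standard-library `graphlib`. The task names and the `run_task` stub below are hypothetical, and the paper does not prescribe this implementation; the sketch only shows how a dependency graph yields layers whose tasks can run concurrently.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Each task maps to the set of tasks whose output it depends on.
PLAN: Dict[str, Set[str]] = {
    "wu_birth": set(),
    "caesar_birth": set(),
    "age_gap": {"wu_birth", "caesar_birth"},
    "write_answer": {"age_gap"},
}


def layers(plan: Dict[str, Set[str]]) -> List[List[str]]:
    """Group tasks into layers; tasks in the same layer are independent."""
    sorter = TopologicalSorter(plan)
    sorter.prepare()                      # raises CycleError if the plan is not a DAG
    result: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every task whose dependencies are done
        result.append(ready)
        sorter.done(*ready)
    return result


def run_task(name: str) -> str:
    """Stub for a real tool call (search, code execution, and so on)."""
    return f"{name}: done"


def execute(plan: Dict[str, Set[str]]) -> List[str]:
    """Run layers in order; tasks within a layer are submitted concurrently."""
    log: List[str] = []
    with ThreadPoolExecutor() as pool:
        for layer in layers(plan):
            log.extend(pool.map(run_task, layer))
    return log


plan_layers = layers(PLAN)
```

Here the two birth-date lookups land in the first layer and run side by side, while the calculation and the final write-up each wait for the layer before them.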

Figure 2: Vanilla RAG and even ReAct-style frameworks proceed in linear steps, whereas the AI Search Paradigm uses branching, parallelizable graph structures.

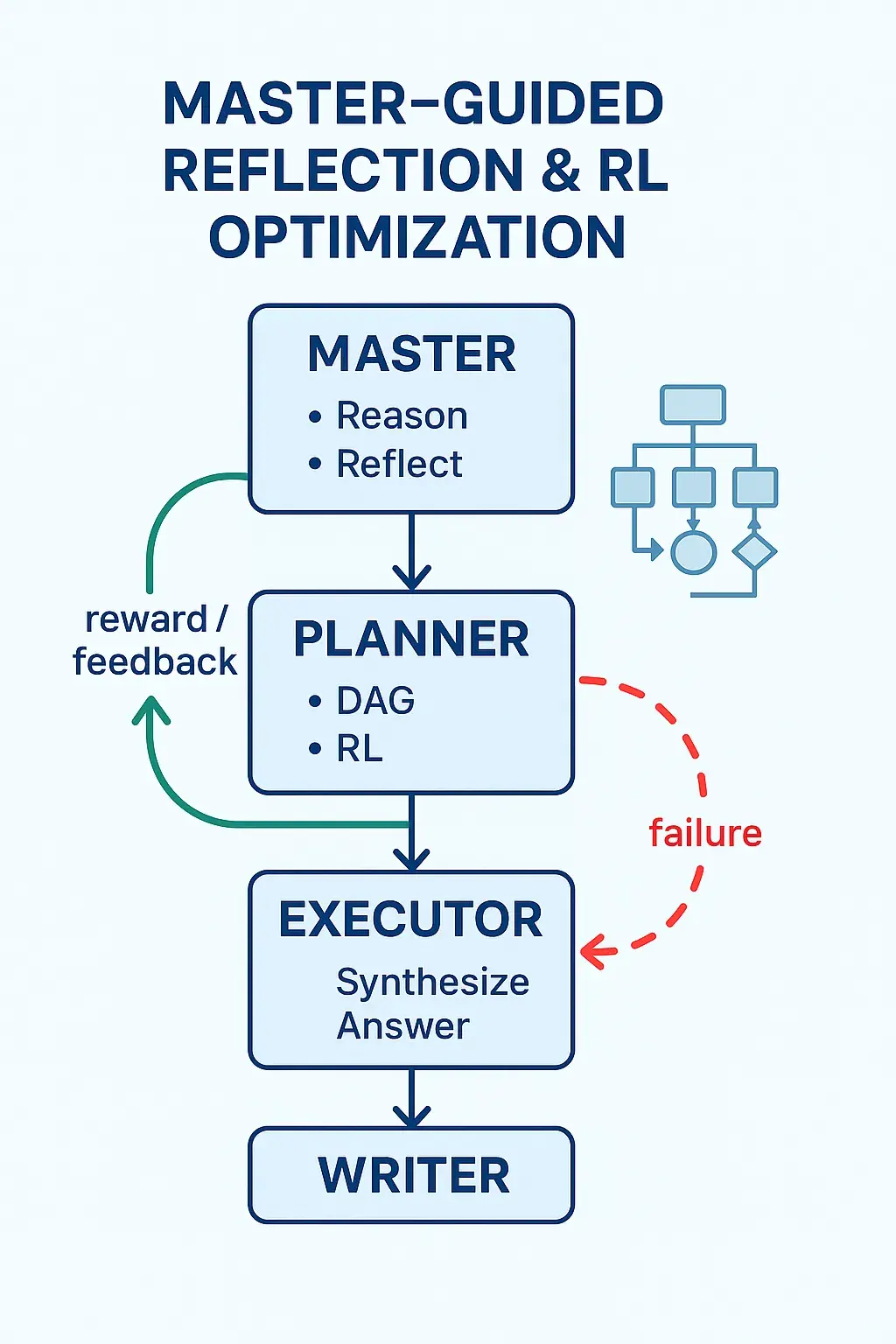

2. The Missing Link: Reflection and Re-planning

Perhaps the most critical weakness of current RAG systems is their “fire-and-forget” nature. If the retrieval step fetches bad data, the generation step produces a bad answer.

The AI Search Paradigm introduces a closed-loop feedback mechanism. The “Master” agent doesn’t just dispatch tasks; it reflects on the output. If an execution fails or returns contradictory data, the Master triggers a re-planning phase, guiding the Planner to adjust the DAG.

This transforms the system from a passive “retrieve-then-generate” pipeline into a proactive “reason, plan, execute, and re-plan” engine.
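
A minimal sketch of that closed loop follows, under simplifying assumptions: the plan is a flat list of steps rather than a DAG, a failed step is signalled by `None`, and `toy_planner` and `toy_executor` are hypothetical stubs standing in for the Planner and Executor agents.

```python
from typing import Callable, Dict, List, Optional

Plan = List[str]
Results = Dict[str, Optional[str]]


def run_with_reflection(
    query: str,
    plan_fn: Callable[[str, List[str]], Plan],
    execute_fn: Callable[[str], Optional[str]],
    max_rounds: int = 3,
) -> Results:
    """Plan, execute, reflect on failures, and re-plan until clean or out of rounds."""
    failed: List[str] = []   # feedback the Master hands back to the Planner
    results: Results = {}
    for _ in range(max_rounds):
        plan = plan_fn(query, failed)                        # plan or re-plan
        results = {step: execute_fn(step) for step in plan}  # execute
        failed = [step for step, out in results.items() if out is None]  # reflect
        if not failed:
            break
    return results


def toy_planner(query: str, failed: List[str]) -> Plan:
    """Switch to a backup source once the primary one has failed."""
    if "search:primary_index" in failed:
        return ["search:backup_index"]
    return ["search:primary_index"]


def toy_executor(step: str) -> Optional[str]:
    """Simulate a primary index that always fails."""
    return None if step == "search:primary_index" else f"result from {step}"


outcome = run_with_reflection("any query", toy_planner, toy_executor)
```

The `max_rounds` cap is a deliberate choice: without it, a query that no plan can satisfy would loop indefinitely.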

Figure 3: The Master agent acts as a supervisor, reviewing outcomes and forcing the Planner to adjust its strategy when failures occur.

The Landscape: A Conceptual North Star

How does this theoretical framework stack up against today’s market leaders?

Current offerings like Perplexity, Bing Chat (now Microsoft Copilot), and Google AI Overviews represent intermediate steps. They have excelled at integrating LLMs with search indices and improving citation transparency. However, they fundamentally remain “search wrappers.” They are highly efficient retrievers, but a dense list of citations can mask a lack of deep reasoning.

The AI Search Paradigm serves as a conceptual north star for where these products must evolve. It moves the goalposts from “search as retrieval” to “search as reasoning.”

The Reality Check: The Cost of Intelligence

While this multi-agent, DAG-based future is compelling, it faces significant hurdles before mass adoption:

- Latency and Cost: A linear RAG call takes seconds and costs fractions of a cent. A multi-agent loop involving planning, parallel execution, reflection, and re-planning is compute-intensive. Is the average user willing to wait 30 seconds and pay significantly more for a deeply reasoned answer?

- Complexity vs. Reliability: Maintaining a complex orchestration of four agents, tool clustering, and reinforcement learning optimization is considerably harder than managing a standard RAG pipeline. Every additional agent and hand-off is another place where the system can fail.

Conclusion

The AI Search Paradigm paper is more than just another technical proposal; it is an argument that the current trajectory of simply adding bigger LLMs to existing search indices has diminishing returns.

To realize the promise of a true “answer engine,” we must move beyond linear chains and embrace architectures designed for complex, non-linear reasoning. By combining dynamic tool use, DAG-based planning, and multi-agent reflection, this paradigm sketches the necessary path toward adaptive and trustworthy AI-driven search.

Reference

Li, Yuchen, et al. Towards AI Search Paradigm. arXiv preprint arXiv:2506.17188, 2025.